All about Kubernetes

What is Kubernetes?

Kubernetes is a portable, extensible, open-source platform for managing containerized workloads and services that facilitates both declarative configuration and automation. It has a large, rapidly growing ecosystem. Kubernetes services, support, and tools are widely available.

The name Kubernetes originates from Greek, meaning helmsman or pilot. Google open-sourced the Kubernetes project in 2014.

Going back in time:

Let’s take a look at why Kubernetes is so useful by going back in time.

Traditional deployment era:

Early on, organizations ran applications on physical servers. There was no way to define resource boundaries for applications in a physical server, and this caused resource allocation issues. For example, if multiple applications run on a physical server, there can be instances where one application would take up most of the resources, and as a result, the other applications would underperform. A solution for this would be to run each application on a different physical server. But this did not scale as resources were underutilized, and it was expensive for organizations to maintain many physical servers.

Virtualized deployment era:

As a solution, virtualization was introduced. It allows you to run multiple Virtual Machines (VMs) on a single physical server’s CPU. Virtualization allows applications to be isolated between VMs and provides a level of security as the information of one application cannot be freely accessed by another application.

Virtualization allows better utilization of resources in a physical server and better scalability, because an application can be added or updated easily. It also reduces hardware costs, and much more. With virtualization you can present a set of physical resources as a cluster of disposable virtual machines.

Each VM is a full machine running all the components, including its own operating system, on top of the virtualized hardware.

Container deployment era:

Containers are similar to VMs, but they have relaxed isolation properties to share the Operating System (OS) among the applications. Therefore, containers are considered lightweight. Similar to a VM, a container has its own filesystem, share of CPU, memory, process space, and more. As they are decoupled from the underlying infrastructure, they are portable across clouds and OS distributions.

Containers have become popular because they provide extra benefits, such as:

Agile application creation and deployment: increased ease and efficiency of container image creation compared to VM image use.

Continuous development, integration, and deployment: provides for reliable and frequent container image build and deployment with quick and easy rollbacks (due to image immutability).

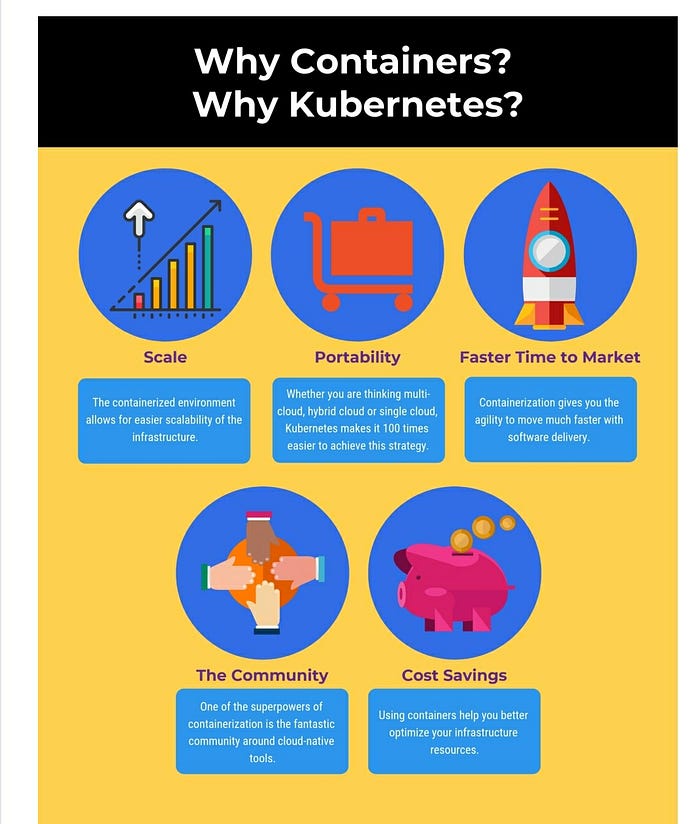

Why you need Kubernetes and what it can do

Containers are a good way to bundle and run your applications. In a production environment, you need to manage the containers that run the applications and ensure that there is no downtime. For example, if a container goes down, another container needs to start. Wouldn’t it be easier if this behavior was handled by a system?

That’s how Kubernetes comes to the rescue! Kubernetes provides you with a framework to run distributed systems resiliently. It takes care of scaling and failover for your application, provides deployment patterns, and more. For example, Kubernetes can easily manage a canary deployment for your system.
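
The "if a container goes down, another container needs to start" behavior can be pictured as a loop that compares a desired state with the actual state and corrects the difference. The sketch below is only an illustration of that idea in plain Python. `ToyCluster`, its fields, and the `app-N` names are hypothetical and are not part of any Kubernetes API.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class ToyCluster:
    """Hypothetical in-memory stand-in for a cluster; not a Kubernetes API."""
    desired_replicas: int
    running: set = field(default_factory=set)
    _ids: count = field(default_factory=count, repr=False)

    def reconcile(self) -> list:
        """Start or stop containers until the running count matches the desired count."""
        actions = []
        # Too few containers: start replacements with fresh names.
        while len(self.running) < self.desired_replicas:
            name = f"app-{next(self._ids)}"
            self.running.add(name)
            actions.append(("start", name))
        # Too many containers: stop the surplus.
        while len(self.running) > self.desired_replicas:
            name = sorted(self.running)[-1]
            self.running.remove(name)
            actions.append(("stop", name))
        return actions


cluster = ToyCluster(desired_replicas=3)
cluster.reconcile()                # starts app-0, app-1, app-2
cluster.running.discard("app-1")   # simulate a container crash
actions = cluster.reconcile()      # starts one replacement container
```

You only state how many copies you want. The loop works out what has to be started or stopped, which is the general shape of a declarative system.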

Docker adoption keeps growing as more and more companies start using it in production, so it is important to use an orchestration platform to scale and manage your containers.

Imagine a situation where you have been using Docker for a little while and have deployed on a few different servers. Your application starts getting massive traffic, and you need to scale up fast. How will you go from 3 servers to the 40 you may require? How will you decide which container should go where? How would you monitor all these containers and make sure they are restarted if they die? This is where Kubernetes comes in.
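
To make the "which container should go where" question concrete, here is a toy placement routine. It assumes that each container asks for a number of CPU cores and each server has a number of free cores, and it picks the fitting server with the most room. The function name `schedule` and the container and node names are made up for illustration. The real Kubernetes scheduler is much richer than this.

```python
def schedule(containers: dict, nodes: dict) -> dict:
    """Assign each container to the node with the most free CPU that can fit it.

    containers: container name -> requested CPU cores
    nodes: node name -> free CPU cores
    Returns container name -> node name, or None when no node has room.
    """
    free = dict(nodes)  # copy so the caller's dict is not modified
    placement = {}
    # Place the largest requests first so small ones do not crowd them out.
    for name, cpu in sorted(containers.items(), key=lambda kv: -kv[1]):
        candidates = [n for n, avail in free.items() if avail >= cpu]
        if not candidates:
            placement[name] = None
            continue
        best = max(candidates, key=lambda n: free[n])
        free[best] -= cpu
        placement[name] = best
    return placement


placement = schedule(
    {"web": 2, "api": 1, "worker": 3},
    {"node-a": 4, "node-b": 2},
)
```

Doing this by hand for 3 servers is manageable. Doing it for 40 servers and hundreds of containers is the kind of work an orchestrator takes over.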

Kubernetes provides you with:

Service discovery and load balancing

Kubernetes can expose a container using a DNS name or its own IP address. If traffic to a container is high, Kubernetes is able to load balance and distribute the network traffic so that the deployment is stable.

Storage orchestration

Kubernetes allows you to automatically mount a storage system of your choice, such as local storage, public cloud providers, and more.
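
The service discovery and load balancing item above can be sketched as one stable name in front of several container addresses, with requests handed out in turn. This is a simplified round-robin illustration, not how Kubernetes implements it. `ToyService` and the IP addresses are hypothetical.

```python
from itertools import cycle


class ToyService:
    """Hypothetical stable name that fronts a set of container addresses."""

    def __init__(self, name, endpoints):
        self.name = name
        self._endpoints = list(endpoints)
        self._next = cycle(self._endpoints)  # endless round-robin iterator

    def route(self):
        """Return the address that should receive the next request."""
        return next(self._next)


svc = ToyService("web", ["10.0.0.1", "10.0.0.2", "10.0.0.3"])
hits = [svc.route() for _ in range(6)]  # each address is picked twice
```

Callers only need the name `web`. Which container actually answers is decided behind that name.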

Use case of Kubernetes:

Pinterest’s Kubernetes story:

Pinterest was born on the cloud — running on AWS since day one in 2010 — but even cloud native companies can experience some growing pains.

Challenge:

Since its launch, Pinterest has become a household name, with more than 200 million active monthly users and 100 billion objects saved. Underneath the hood, there are 1,000 microservices running and hundreds of thousands of data jobs.

With such growth came layers of infrastructure and diverse set-up tools and platforms for the different workloads, resulting in an inconsistent and complex end-to-end developer experience, and ultimately less velocity to get to production. So in 2016, the company launched a roadmap toward a new compute platform, led by the vision of having the fastest path from an idea to production, without making engineers worry about the underlying infrastructure.

Solutions:

The first phase involved moving to Docker. “Pinterest has been heavily running on virtual machines, on EC2 instances directly, for the longest time,” says Micheal Benedict, Product Manager for the Cloud and the Data Infrastructure Group. “To solve the problem around packaging software and not make engineers own portions of the fleet and those kinds of challenges, we standardized the packaging mechanism and then moved that to the container on top of the VM. Not many drastic changes. We didn’t want to boil the ocean at that point.”

The first service that was migrated was the monolith API fleet that powers most of Pinterest. At the same time, Benedict’s infrastructure governance team built chargeback and capacity planning systems to analyze how the company uses its virtual machines on AWS.

“It became clear that running on VMs is just not sustainable with what we’re doing,” says Benedict. “A lot of resources were underutilized. There were efficiency efforts, which worked fine at a certain scale, but now you have to move to a more decentralized way of managing that. So orchestration was something we thought could help solve that piece.”

That led to the second phase of the roadmap. In July 2017, after an eight-week evaluation period, the team chose Kubernetes over other orchestration platforms. “Kubernetes lacked certain things at the time. For example, we wanted Spark on Kubernetes,” says Benedict. “But we realized that the dev cycles we would put in to even try building that is well worth the outcome, both for Pinterest as well as the community. We’ve been in those conversations in the Big Data SIG. We realized that by the time we get to productionizing many of those things, we’ll be able to leverage what the community is doing.”


Impact:

“By moving to Kubernetes the team was able to build on-demand scaling and new failover policies, in addition to simplifying the overall deployment and management of a complicated piece of infrastructure such as Jenkins,” says Micheal Benedict, Product Manager for the Cloud and the Data Infrastructure Group at Pinterest. “We not only saw reduced build times but also huge efficiency wins.”

They ramped up the cluster, and working with a team of four people, got the Jenkins Kubernetes cluster ready for production. “We still have our static Jenkins cluster,” says Benedict, “but on Kubernetes, we are doing similar builds, testing the entire pipeline, getting the artifact ready and just doing the comparison to see, how much time did it take to build over here. Is the SLA okay, is the artifact generated correct, are there issues there?”

“So far it’s been good,” he adds, “especially the elasticity around how we can configure our Jenkins workloads on Kubernetes shared cluster. That is the win we were pushing for.”

By the end of Q1 2018, the team successfully migrated Jenkins Master to run natively on Kubernetes and also collaborated on the Jenkins Kubernetes Plugin to manage the lifecycle of workers. “We’re currently building the entire Pinterest JVM stack (one of the larger monorepos at Pinterest which was recently bazelized) on this new cluster,” says Benedict. “At peak, we run thousands of pods on a few hundred nodes.” Overall, the move brought reduced build times and efficiency wins: the team reclaimed over 80 percent of capacity during non-peak hours, and as a result the Jenkins Kubernetes cluster now uses 30 percent fewer instance-hours per day than the previous static cluster.

After years of being a cloud native pioneer, Pinterest is eager to share its ongoing journey. “We are in the position to run things at scale, in a public cloud environment, and test things out in a way that a lot of people might not be able to do,” says Benedict. “We’re in a great position to contribute back some of those learnings.”

This is how Pinterest transformed its services by moving to Kubernetes.

Hope you all liked this article 😌😌.